Bio

I graduated from Stanford University with a B.S. in Mathematics and an M.S. in Computer Science, where I concentrated my studies on probability and machine learning. I was fortunate to be advised by Amir Dembo for my undergraduate degree and Christopher Ré for my graduate degree. At Stanford, I explored a variety of areas with the goal of learning how to leverage my understanding of random phenomena and artificial intelligence to develop exciting ideas and products that will revolutionize our world. I believe that these two fields can not only guide us to a deeper understanding of the problems that challenge our lives but also give rise to robust, innovative solutions.

I now work as a Deep Learning Applied Scientist at NVIDIA on the foundation model training team. I primarily focus on developing new large language models (LLMs) and augmenting their abilities via continued training.

// In the past, I've:

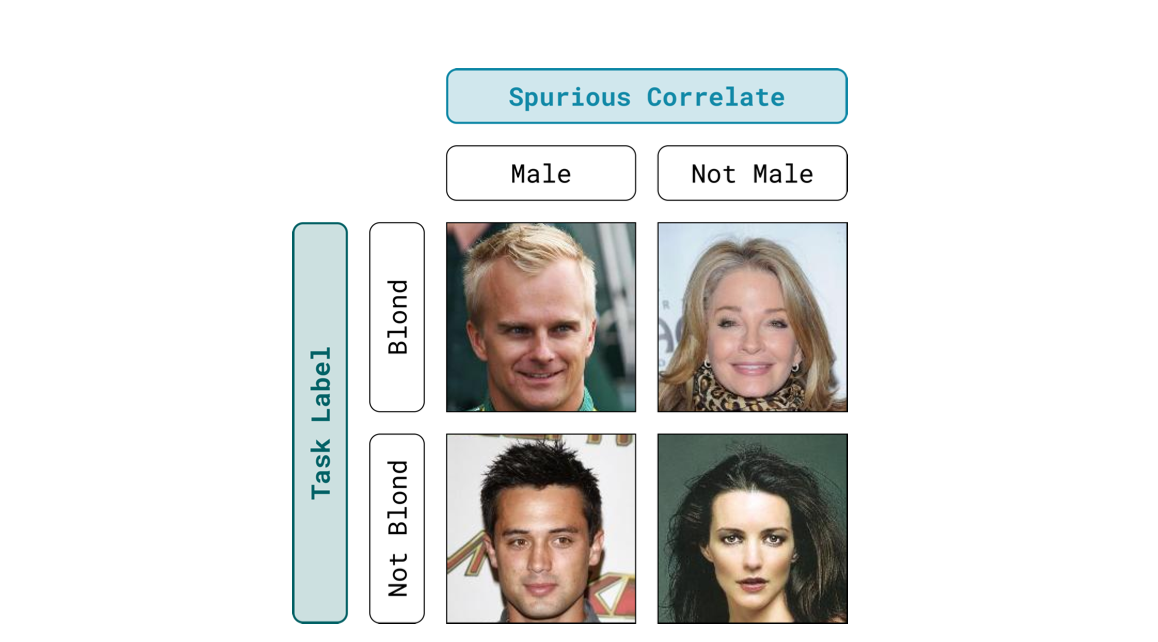

Worked with Tatsu Hashimoto on improving the group robustness of machine learning models. Current models achieve high average performance but can incur high error on certain groups of rare and atypical examples. We developed a new framework that allows models to achieve better worst-group performance.

Worked as a teaching assistant for CS 234: Reinforcement Learning, where I had the wonderful opportunity to help introduce students to the exciting field of reinforcement learning. Teaching has always been a passion of mine, and it was gratifying to aid others in their educational journeys.

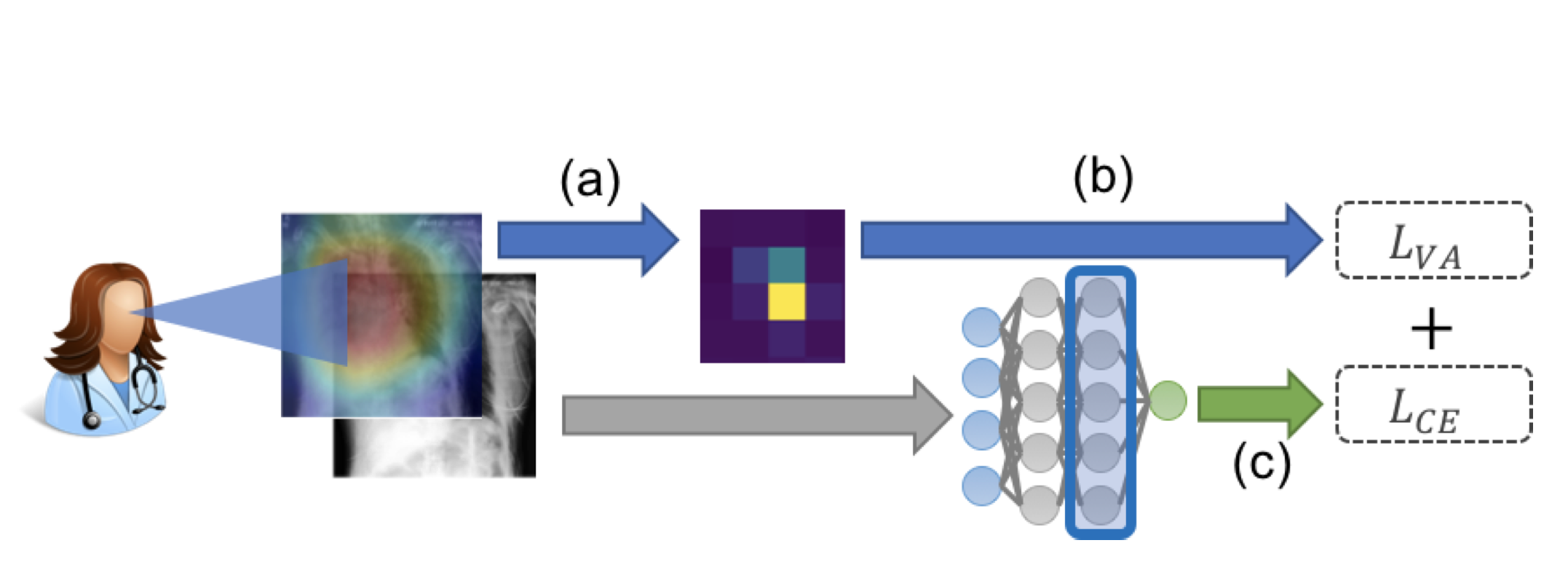

Conducted research in Chris Ré's lab at Stanford with Khaled Saab. We used passively observed human signals, such as gaze, to learn better task representations and help train more robust, generalizable deep learning models for medical image classification.

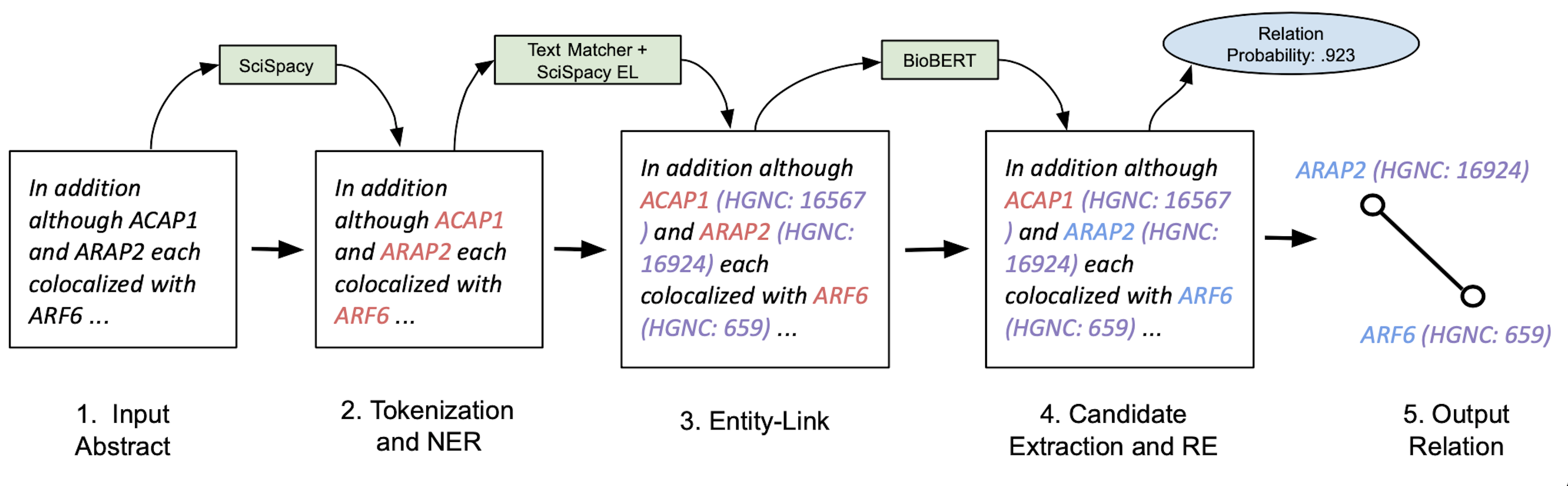

Worked as a Machine Learning Engineer and as the first Product Manager at OccamzRazor, an early-stage company developing a biomedical knowledge graph that aims to advance the understanding of drug efficacy and provide novel treatments for diseases. My initial focus was on expanding their natural language processing pipeline by researching and engineering new architectures for biomedical information extraction. Later, I focused on expanding the product platform from repurposing known drugs to the broader set of small molecules that actively interact with any druggable protein. I also spent time exploring how to make graph learning algorithms more interpretable.

Implemented architectures described in various machine learning research papers from scratch in PyTorch. I wanted to dive deeper into the content I was learning in my classes and found that the best way to do so was to get hands-on experience with the intricacies of each model. Some of my favorite architectures to implement were ResNet and a neural image caption generation model.

Worked as a quantitative trading intern at IMC Trading on the Small Index Options Desk, where I developed new trading strategies that leveraged market sentiment information to improve performance in U.S. options auctions.

Worked as a software engineering intern at SAS on the Enterprise Computing team. I helped with the team's transition to Kubernetes and developed a machine learning model to predict which workloads were likely to cause system failures.

Conducted research with David Chin at UMass Amherst on the intersection of quantum and classical machine learning. In particular, we examined whether quantum models could help us better assess the quality of care provided by hospitals.

Worked with David Doty at UC Davis to build a domain-specific language that allowed researchers to implement complex theoretical models in the field of algorithmic self-assembly.

// Outside of Work I Enjoy:

- Playing chess. The Italian Game is my favorite opening.

- Cooking new dishes. Souvlaki has been my most recent hit recipe.

- Attending concerts and music festivals. Lollapalooza was particularly memorable.

- Hiking and running. This trail in Lake Tahoe offers some amazing views.

- Deep diving into philosophy. Socrates holds a special place in my heart.

- Reading. My bookshelf is a good way to see what's on my list and what my favorites are.

Projects

Publications and Written Pieces

The Importance of Background Information for Out of Distribution Generalization

Jupinder Parmar, Khaled Saab, Brian Pogatchnik, Daniel Rubin, Christopher Ré

Workshop on Spurious Correlations, Invariance, and Stability at the International Conference on Machine Learning (ICML 2022)

Observational Supervision for Medical Image Classification using Gaze Data

Khaled Saab, Sarah Hooper, Nimit Sohoni, Jupinder Parmar, Brian Pogatchnik, Sen Wu, Jaredd Dunnmon, Hongyang Zhang, Daniel Rubin, Christopher Ré

Medical Image Computing and Computer Assisted Intervention (MICCAI 2021)

Biomedical Information Extraction For Disease Gene Prioritization

Jupinder Parmar, William Koehler, Martin Bringmann, Katharina Sophia Volz, Berk Kapicioglu

Knowledge Representation and Reasoning Meets Machine Learning Workshop at Neural Information Processing Systems (NeurIPS 2020)

A Formulation of a Matrix Sparsity Approach for the Quantum Ordered Search Algorithm

Jupinder Parmar, Saarim Rahman, Jesse Thiara

International Journal of Quantum Information (2017)

Resume


NVIDIA (September 2022 - Present): Deep Learning Applied Scientist

Conversational AI

OccamzRazor (September 2021 - June 2022): Product Manager

Expanded the Existing Product Platform

IMC Trading (Summer 2021): Quantitative Trading Intern

Developed Trading Strategies for U.S. ETF Option Auctions

Stanford University (2020 - 2022): Graduate

M.S. in Computer Science. Advised by Christopher Ré.

OccamzRazor (March 2020 - June 2021): Machine Learning Engineer

Worked on NLP and Graph Learning

Stanford AI Lab (November 2019 - Present): Researcher

Working on Representation Learning and Domain Generalization

SAS Institute (Summer 2019): Software Engineer Intern

Used Machine Learning to Predict System Failures

Stanford University (2018 - 2022): Undergraduate

B.S. in Mathematics. Advised by Amir Dembo.

Acknowledgement

This website draws design inspiration from Tatsunori Hashimoto and Jason Zhao.